Implicator PRO Briefing / 24 Mar 2026

Most AI strategies built between 2023 and 2024 rest on a single bet: that access to a powerful model is, by itself, a competitive advantage. That bet is losing. Token prices have cratered 99% in three years. Six providers sell comparable intelligence at what amounts to commodity pricing. Open-source alternatives undercut them all. The model moat, the thing everyone spent two years chasing, is gone.

What replaces it is not obvious from the headlines. The companies building durable positions in Q1 2026 are not training better models. They are wiring the plumbing around the models: orchestration layers, data flywheels, workflow hooks, compliance scaffolding, inference-routing engines. Five categories, each with its own switching costs and margin structure. This analysis maps those migration lanes using current earnings data, more than $11 billion in Q1 M&A, active venture rounds, and the EU AI Act's August enforcement deadline. If you are allocating capital, talent, or strategy around AI this year, what follows is the operating map.

Here is a story that matters more than it should. In 1881, a cotton mill in Newberry, South Carolina became the first American factory to replace its central steam engine with an electric motor. Better economics, less maintenance, no soot on the walls. What the mill's owners did next, though, would haunt American industry for a generation. They bolted the new motor right where the steam engine had sat. Same overhead shafts. Same leather belts. Same floor plan. The factory ran as if nothing had changed, and productivity barely moved.

Nearly four decades passed before manufacturers figured out the real lesson. Electricity's value had nothing to do with generating power. The winners of the electric age tore their factories apart and rebuilt them around the new energy source. Out went the central-shaft architecture. In came distributed motors at each workstation, single-story plants with natural lighting, assembly lines that steam could never have supported. The generator was a commodity almost from the start. The money was in the switchboard, the wiring diagram, the reorganized factory floor.

Paul David, the Stanford economist, documented this lag in his landmark 1990 paper "The Dynamo and the Computer." Twenty-five to forty years for electricity's full productivity impact to show up. Not because the technology was immature. Because companies kept plugging new power into old architecture. In 2026, the parallel is no longer theoretical. The San Francisco Federal Reserve cited David's framework in a February 2026 Economic Letter, putting AI adoption and electrification on a side-by-side timeline. The European Central Bank invoked Erik Brynjolfsson's "Productivity J-Curve" in a March 2026 speech. An institutional consensus is hardening: we are in the switchboard phase of AI. The companies still optimizing their generators are about to find out they are in the wrong business.

The generator, in this analogy, is the large language model itself. And the intelligence it produces has become, for all practical purposes, a commodity input.

Key Numbers

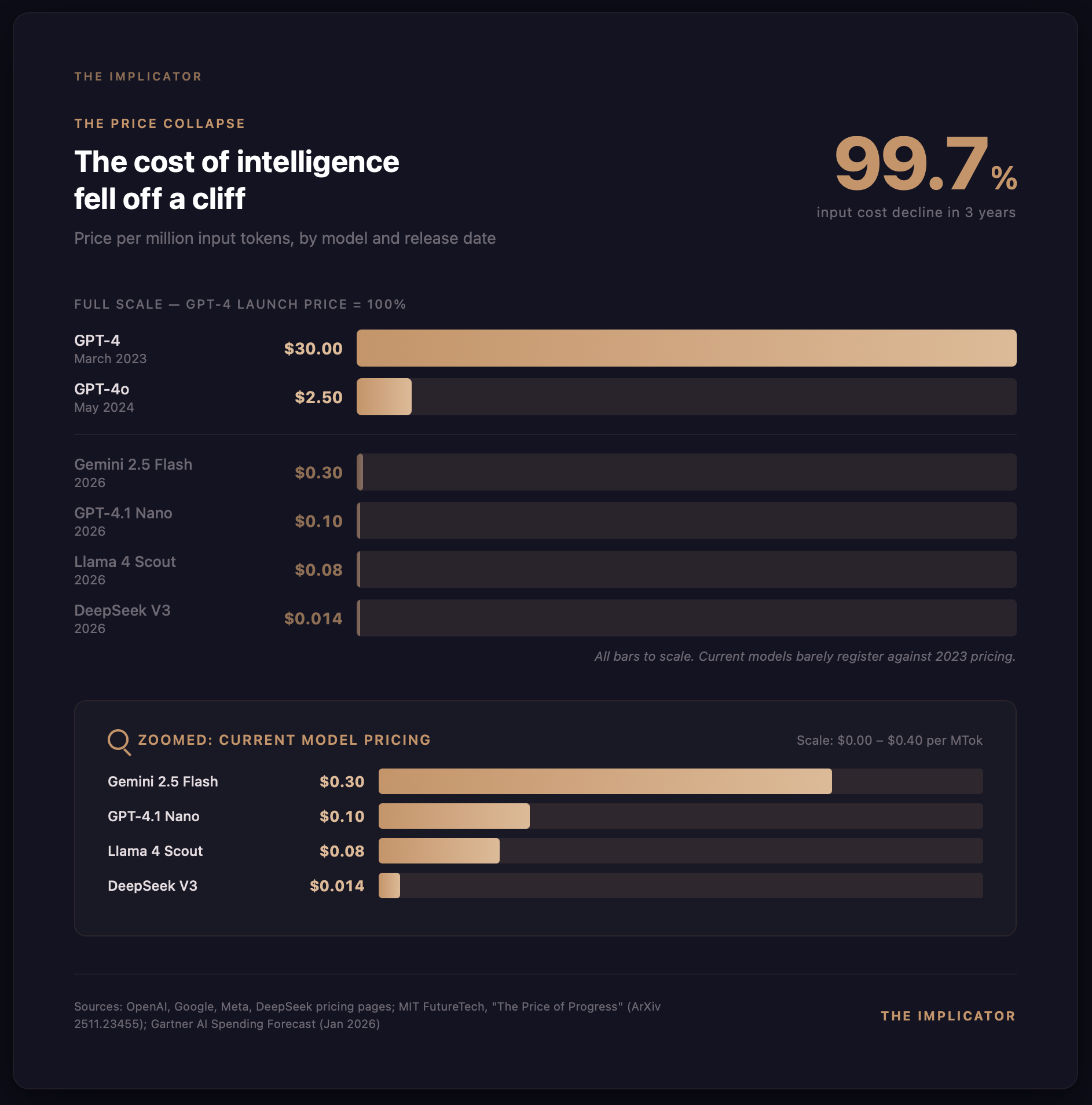

- 99.7% decline in token input pricing from GPT-4 (March 2023) to GPT-4.1 Nano (2026), from $30 to $0.10 per million tokens

- $650 billion committed to AI infrastructure by hyperscalers in 2026, per Bloomberg, Goldman Sachs, and Moody's estimates

- Five migration lanes where defensible value is forming: orchestration, data flywheels, workflow integration, compliance, and inference economics

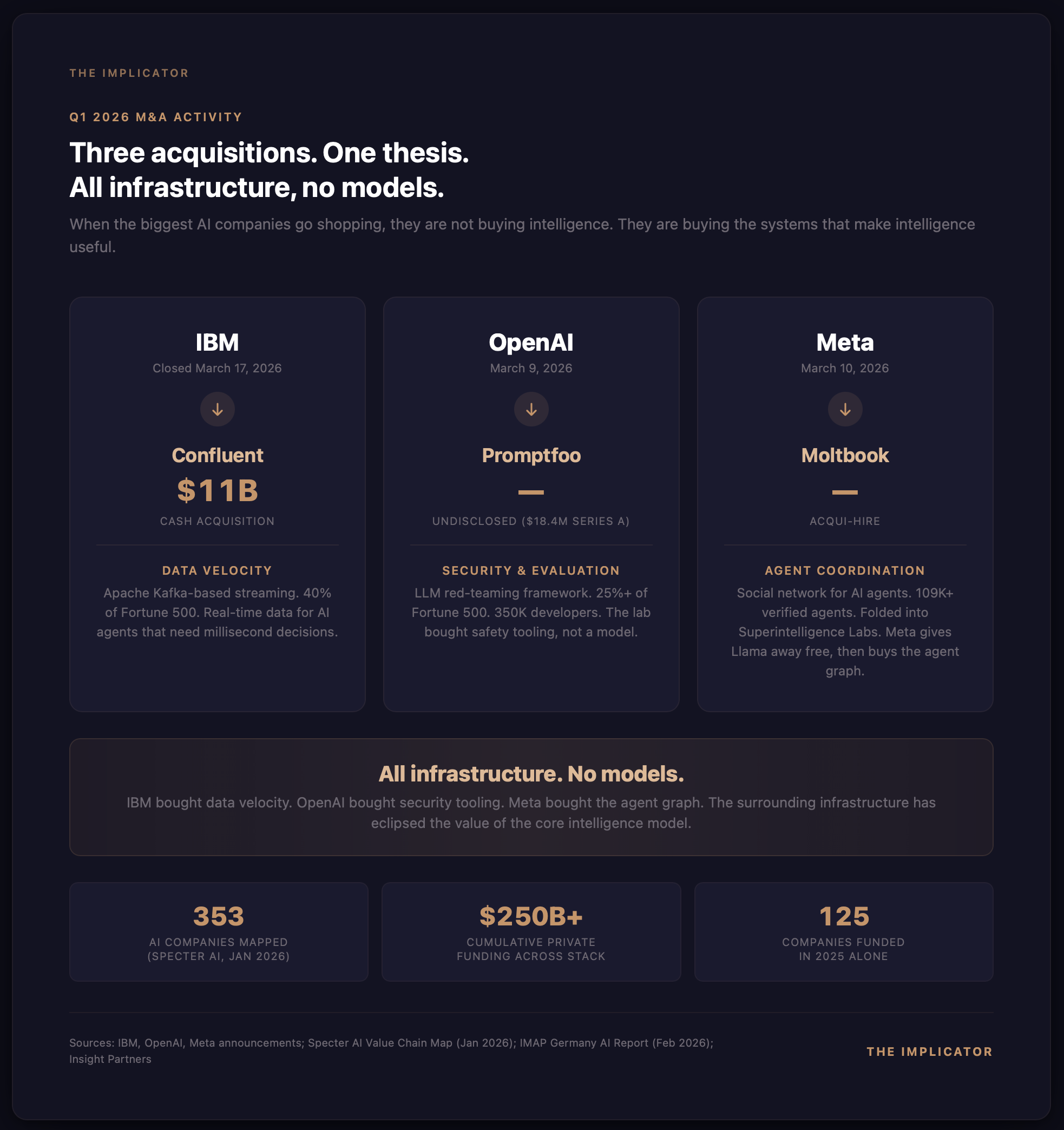

- $11 billion+ in Q1 2026 M&A targeting infrastructure (IBM-Confluent, OpenAI-Promptfoo, Meta-Moltbook), none targeting model companies

The price of thinking fell off a cliff

Here is what happened to the price of thinking. GPT-4 launched on March 14, 2023, charging $30 per million input tokens and $60 per million output tokens. Today, GPT-4.1 Nano charges ten cents on input, forty cents on output, a decline of 99.7% and 99.3% respectively. That enterprise chatbot your team budgeted $8,000 to $10,000 a month to run in 2023 now costs maybe $50 to $150 a month on a model that, for most commercial work, performs about as well as the old one did.

And it is not an OpenAI-specific story. Google's Gemini 2.5 Flash: $0.30 per million input tokens. DeepSeek's V3, the Chinese open-source model that rattled Silicon Valley in January 2025: $0.014 per million tokens, roughly two thousand times cheaper than GPT-4 at launch. Meta's Llama 4 Scout, running through third-party inference providers: $0.08 per million input tokens. Self-hosting an open-weight model of near-frontier quality now costs less per query than a gas station coffee.

Hans Gundlach, Jayson Lynch, Matthias Mertens, and Neil Thompson at MIT FutureTech put numbers on the mechanism in a paper presented at NeurIPS 2025. Inference costs are declining five to ten times per year, driven by hardware improvements, algorithmic efficiency gains of roughly 3x annually, and pricing competition that borders on irrational. A follow-up paper from the same group, published February 2026, found that the compute required to hit a specific benchmark score dropped by a factor of 50 among leading developers in under two years. Intelligence is not scarce. It is abundant, getting cheaper by the quarter, and available from a half-dozen providers at commodity pricing.
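The price arithmetic above is easy to check. The sketch below recomputes the percentage declines from the GPT-4 and GPT-4.1 Nano list prices quoted earlier and derives the constant yearly factor those two price points imply. The three-year span is an assumption taken from the "three years" framing in the introduction, and the comparison is between two different model tiers, so treat the result as a rough sanity check rather than a measured trend.

```python
# Prices quoted in the text, in USD per million tokens.
GPT4_INPUT, GPT4_OUTPUT = 30.00, 60.00   # GPT-4 at launch, March 2023
NANO_INPUT, NANO_OUTPUT = 0.10, 0.40     # GPT-4.1 Nano


def percent_decline(old: float, new: float) -> float:
    """Percentage drop from the old price to the new price."""
    return (old - new) / old * 100


def annual_decline_factor(old: float, new: float, years: float) -> float:
    """Constant yearly factor that compounds to the total old/new ratio."""
    return (old / new) ** (1 / years)


print(f"input decline:  {percent_decline(GPT4_INPUT, NANO_INPUT):.1f}%")
print(f"output decline: {percent_decline(GPT4_OUTPUT, NANO_OUTPUT):.1f}%")

# ASSUMPTION: roughly three years separate the two price points.
factor = annual_decline_factor(GPT4_INPUT, NANO_INPUT, years=3)
print(f"implied yearly factor on input price: {factor:.1f}x")
```

Under that three-year assumption, the input price works out to a drop of roughly 6.7x per year, which sits inside the five-to-ten-times range the MIT FutureTech paper reports.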

Aaron Levie, CEO of Box, told Business Insider in 2025 that token costs would fall to "near zero" by 2026. On the Latent Space podcast, he pushed harder: "In ten years from now, we'll have infinite context windows at a thousandth of the price of today." The market has largely vindicated his timeline. His exact figures, maybe not. But the direction was dead right.

By Gartner's January forecast, global AI spending will hit $2.52 trillion in 2026, up 44% in a single year. But total spending is not the interesting question. The interesting question is who keeps the margin.

The HALO trade and its blind spot

Wall Street thinks it has figured this out. In February 2026, Josh Brown of Ritholtz Wealth Management coined the "HALO" trade: Heavy Assets, Low Obsolescence. Simple thesis. In an AI economy, the scarce resources are physical. Data centers. Power generation. Semiconductor fabs. The land they sit on. Software is abundant and depreciating. Hardware is constrained and appreciating.

It spread fast. Goldman Sachs published "The HALO Effect: Heavy Assets, Low Obsolescence in the AI Era" on February 24, with analysts Guillaume Jaisson and Ben Snider documenting a 35% outperformance of capital-intensive stocks over capital-light stocks since January 2025. Goldman called it a "repricing of scarcity." Morgan Stanley's trading desk said the shift was "structural rather than tactical." Within weeks, HALO had become shorthand on institutional floors for a single conviction: AI's value accrues to atoms, not bits.

HALO is not wrong, exactly. Hyperscalers have committed an estimated $650 billion to AI infrastructure in 2026, a number confirmed across Bloomberg, Goldman Sachs, and Moody's estimates. Physical constraints are real. But HALO has a blind spot the size of the entire software stack.

HALO assumes a clean binary, hardware up, software down, and in doing so it ignores an entire middle layer sitting between the commodity model and the enterprise customer. Orchestration, integration, compliance, inference optimization. All of it. That is where the switchboard companies are being built right now. And Q1 2026 evidence suggests this layer, not the model layer and not only the hardware layer, is where the most defensible enterprise value is concentrating.

Five lanes carry this migration, and understanding them is, at this point, the difference between investing in generators and investing in the factory redesign.

Lane 1: The orchestration layer

On November 25, 2024, Anthropic open-sourced the Model Context Protocol, a standard for connecting AI models to external tools and data sources. Almost nobody noticed. Then, over the next sixteen months, MCP quietly became the connective tissue of the AI industry, growing to more than 10,000 active public servers and 97 million monthly SDK downloads. OpenAI adopted it in March 2025. "People love MCP," Sam Altman said, "and we are excited to add support across our products." Microsoft announced Windows 11 MCP integration at Build 2025. Google launched fully managed remote MCP servers in December 2025, wiring up BigQuery, Maps, Compute Engine, and GKE. By December 9, the Linux Foundation had taken MCP as a donation and established the Agentic AI Foundation with Anthropic, Block, and OpenAI as co-founders. Platinum members: AWS, Bloomberg, Cloudflare, Google, Microsoft.

Anthropic's own trajectory is the orchestration pivot in miniature. Claude Code went from research preview in February 2025 to general availability by May. By February 2026, the company reported that the product had reached $2.5 billion in annualized revenue, with business subscriptions quadrupling since January and enterprise customers now representing more than half of that revenue. Anthropic's total run rate climbed to $14 billion. Then Cowork arrived, research preview on January 30, enterprise launch February 24, instantly plugging into Google Workspace, DocuSign, Apollo, Clay, FactSet, Harvey, WordPress. Spotify, Thomson Reuters, the New York Stock Exchange, and Epic were among the first enterprise customers. Within two weeks, Microsoft had launched "Copilot Cowork" powered by Claude at $30 per user per month, piping Anthropic's agent infrastructure straight into the Microsoft 365 ecosystem.

The pattern is hard to miss. Anthropic is not competing primarily on model quality anymore. It is building the orchestration layer that makes AI models useful inside enterprise workflows, then licensing that layer to the largest distribution platforms on Earth.

It is not alone. On March 9, Dataiku launched its "Platform for AI Success" with three new products: Agent Management, Cobuild, Reasoning Systems. SiliconANGLE was blunt about what it meant: Dataiku is evolving into "the orchestration layer for enterprise-grade AI agents." Valued at $3.7 billion, ARR north of $350 million, the company has hired Morgan Stanley and Citigroup for a potential US IPO in the first half of 2026.

Then NVIDIA did something nobody expected. The company that built its AI dominance on proprietary CUDA lock-in shipped an open-source Agent Toolkit at GTC 2026, packaging OpenShell for security, AI-Q for agentic search, and the Nemotron model family for inference. A dozen enterprise heavyweights, Salesforce, ServiceNow, Atlassian, Adobe, SAP among them, joined at launch.

Venture capital is voting accordingly. LangChain, the developer framework for building LLM-powered apps, raised $125 million at a $1.25 billion valuation in October 2025; 35% of the Fortune 500 use its services. CrewAI, which orchestrates multi-agent workflows, pulled in $18 million from Insight Partners and processes 450 million agents per month for PwC, IBM, and Capgemini. Microsoft retired its AutoGen research framework in October 2025, swapping in the production-grade Agent Framework to unify and govern enterprise AI agents.

But the most telling number belongs to Salesforce. Agentforce, its AI agent platform, has closed more than 29,000 total deals through Q4 FY2026, with standalone ARR blowing past $540 million by Q3. Analysts project $800 million by year-end, and Marc Benioff has called it the fastest-growing product in the company's history. More than 3.2 trillion tokens processed so far. And here is what makes Agentforce instructive for this thesis: the model powering it is, in competitive terms, interchangeable. The orchestration layer around it is not.

Lane 2: Proprietary data flywheels

If orchestration is the wiring diagram, proprietary data is the factory floor itself, the one no competitor can clone. The cleanest example right now is Glean.

Glean has been at this since 2019. The company builds what amounts to an enterprise knowledge graph, one that hooks into internal apps and pulls documents, messages, tickets, conversations from dozens of platforms, all while preserving each system's permissions structure. What creates the moat is not the search algorithm. It is the graph underneath, growing more accurate with every query, every click, every correction an employee makes. The data feeds on itself, and nobody outside the company has a copy.

Follow the valuation trajectory to understand what investors are actually buying. Glean's valuation climbed from $1 billion in May 2022 to $2.2 billion by February 2024, then $4.6 billion by that September. When Wellington Management led a $150 million Series F in June 2025, the price tag had reached $7.2 billion. Revenue followed a similar arc. ARR crossed $100 million in February 2025, in under three years, and doubled to $200 million by year's end. More than 100 million agent actions annually. Investors are not paying 36x revenue for a search product. They are paying for the graph underneath it.

Here is why Glean matters to this thesis. Its knowledge graph is built from accumulated interaction data across every employee in the customer's organization. Walk away from Glean and you are not just replacing a search tool. You are abandoning the organizational memory the graph has constructed. No competitor can import it, and the customer cannot export it. That flywheel is the moat.

Jaya Gupta and Ashu Garg at Foundation Capital formalized the idea in a December 2025 essay they called "The Context Graph." Their argument: persisting decision traces, the record of which information was retrieved, how it was used, what decisions it supported, represents a trillion-dollar opportunity. In a separate piece on December 30, Garg issued a warning: "In 2026, I expect the incumbents to assert that control more aggressively. Some of it will be framed as security and privacy, but the effect will be the same: tighter access, more friction, more rules."

Garg was describing a squeeze already under way. In June 2025, Salesforce changed Slack's API terms to stop third-party apps from indexing, copying, or permanently storing Slack messages. Glean got hit directly. It told customers that the changes "will obstruct your ability to use your data with your chosen enterprise AI platform." The platform wars for enterprise data have started, and companies with deep, proprietary data integrations now find themselves both the most valuable acquisition targets and the most exposed to platform lock-in.

Lane 3: Integration and workflow moats

Lane three is subtler than orchestration or data, and its switching costs may be the highest of all. When AI gets embedded into the daily operational fabric of a business, handling customer communications, managing freight bids, triaging support tickets, coordinating across Slack and email and phone, the lock-in that forms is not contractual. It is behavioral. Pulling the system out means rebuilding how the company actually runs.

Take freight. GoodShip is a good example. Ryan Soskin, who came up through Coyote, Convoy, and Stord, co-founded the company in 2022 with David Tsai (ex-Amazon, Convoy). With $42.8 million raised across four rounds, the most recent a $25 million Series B last August, GoodShip's AI now handles freight procurement and orchestration for Tropicana, KeHe Distributors, and Kellanova. Revenue climbed tenfold in 2024. Spreadsheet-based freight management, gone. In its place: AI-driven bid optimization reporting 3 to 5% reductions in transportation spend and a 20% decrease in late shipments. The moat is not the model. GoodShip now sits in the daily operational flow of how these companies move physical goods. Good luck ripping that out.

Augment pushes the workflow moat further. Harish Abbott, co-founder of Deliverr (acquired by Shopify for $2.1 billion), built Augie, an AI product that handles the full order-to-cash lifecycle in logistics, managing emails, voice calls, Slack messages, SMS, Telegram, and TMS portal interactions autonomously. With $110 million in funding ($25 million seed from 8VC, $85 million Series A from Redpoint Ventures), Augment now supports more than $35 billion in freight under management for Armstrong Transport Group, Echo, Penske, and others. The numbers are hard to argue with: 40% reduction in invoice delays, 5%-plus gross margin recovery per load. But the moat is not in the numbers. Augie is not a tool that logistics companies use. It is a colleague they depend on, and replacing a colleague is not a software migration. It is organizational surgery.

Derek Ashmore of Asperitas, writing in InformationWeek, captured the architectural implication: the smart move is to treat low-level agent orchestration as a temporary advantage, not a permanent asset. He advises designing stacks so that companies can "swap in vendor innovations as they mature, while your real differentiation lives in the domain models, policies, and evaluation data that no platform vendor can ship."

Call it the abstraction layer thesis, and it cuts both ways. Companies that build an abstraction layer between their workflows and their AI models can swap providers when something better or cheaper shows up. Companies that hard-coded a specific model into their operational infrastructure? Prohibitive migration costs. Tobias Pfuetze, a fintech founder, documented the divergence on Medium: companies with proper abstraction layers tested DeepSeek on production workflows within hours of its release. Companies without them could not even begin the evaluation.
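To make the abstraction layer idea concrete, here is a minimal sketch of the pattern: workflow code calls a single gateway, and the model behind it is a configuration choice. Every name here (`ModelGateway`, `incumbent_model`, `challenger_model`, `summarize_ticket`) is hypothetical and invented for illustration. The provider functions are stand-ins that return tagged strings, where real code would wrap each vendor's client.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A provider is anything that maps a prompt string to a completion string.
Provider = Callable[[str], str]


@dataclass
class ModelGateway:
    """Single entry point that workflows call instead of a vendor SDK."""
    providers: Dict[str, Provider]
    active: str

    def complete(self, prompt: str) -> str:
        # Delegate to whichever provider is currently active.
        return self.providers[self.active](prompt)

    def switch(self, name: str) -> None:
        # Swapping models is a lookup, not a rewrite of workflow code.
        if name not in self.providers:
            raise KeyError(f"unknown provider: {name}")
        self.active = name


# HYPOTHETICAL stand-ins: real code would call each vendor's API here.
def incumbent_model(prompt: str) -> str:
    return f"[incumbent] {prompt}"


def challenger_model(prompt: str) -> str:
    return f"[challenger] {prompt}"


def summarize_ticket(gateway: ModelGateway, ticket: str) -> str:
    """Workflow logic depends only on the gateway, never on a vendor."""
    return gateway.complete(f"Summarize: {ticket}")


gateway = ModelGateway(
    providers={"incumbent": incumbent_model, "challenger": challenger_model},
    active="incumbent",
)
print(summarize_ticket(gateway, "refund request"))
gateway.switch("challenger")  # evaluating a new model is a one-line change
print(summarize_ticket(gateway, "refund request"))
```

The design choice is that `summarize_ticket` never imports a vendor library. A company structured this way can point production traffic at a newly released model by registering one more provider, which is the difference between testing within hours and being unable to start.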

This risk is not theoretical. Builder.ai, once valued at $1.5 billion with backing from Microsoft, Qatar Investment Authority, and SoftBank, filed for insolvency on May 20, 2025 after Bloomberg reported revenues inflated by as much as 300%. Customers woke up to locked-out source code, inaccessible deployment systems, vanished business data. Competing platforms tried to help with emergency migrations. For most customers, it was too late. Industry data from CloudBees suggests that enterprises lose roughly $315,000 per platform migration, and more than half of IT leaders say they spend over $1 million a year on migrations.

What the lane three winners have figured out is how to be operationally indispensable while still letting customers swap the underlying model. Hard trick to pull off. But the moats it creates are immune to price cuts.

Three companies outside freight show the same pattern at scale. Harvey AI, valued at roughly $8 billion after a December 2025 Series F led by Andreessen Horowitz, has deployed custom-trained legal AI at Paul Weiss, A&O Shearman (more than 3,500 lawyers generated 40,000 queries during the trial), and Reed Smith. Bloomberg Law was direct about Harvey's edge: "early enthusiasm and the switching costs law firms would endure to change course." In sales, Gong ($584 million in funding, $7.25 billion valuation, roughly $300 million ARR) captures 100x more conversational data than a traditional CRM. Sixty-seven percent of users log in five-plus days per week. Multi-year contracts carry 50 to 100% early termination penalties; implementations run three to six months at $50,000 to $150,000. Then there is GitHub Copilot: 15 million users, inline completions, agent mode, PR review, MCP connections to CI/CD pipelines. A level of platform integration no standalone AI coding tool can touch.

Lane 4: Trust, compliance, and the regulatory moat

Technologists underestimate this lane more than any other. Enterprise buyers do not. Trust and compliance infrastructure, the layer that makes AI systems auditable, explainable, and legally defensible, is turning into one of the most capital-efficient moats in the AI economy.

Start with the regulatory terrain. The EU AI Act's high-risk requirements become enforceable August 2, 2026. Companies deploying AI in healthcare, financial services, employment, education, law enforcement, or critical infrastructure must implement risk management systems, data governance frameworks, technical documentation, automatic logging, transparency provisions, human oversight mechanisms, conformity assessment with CE marking. That is not a wish list. It is a legal obligation. Penalties run to EUR 35 million or 7% of global annual turnover for prohibited practices, EUR 15 million or 3% for high-risk violations, numbers large enough to get a CFO's attention without any help from the press. By the German Federal Statistical Office's estimate, compliance runs about EUR 52,000 per year per high-risk AI model, and the bill adds up fast when a mid-market enterprise has dozens of models deployed across the organization.

Vanta has quietly become the compliance default for fast-growing tech companies. Wellington Management led a July 2025 Series D that pushed the valuation to $4.15 billion. The platform automates evidence collection and continuous monitoring across more than 35 frameworks, everything from SOC 2 and HIPAA to ISO 27001 and the EU AI Act's new companion standard ISO 42001. Mistral AI, Duolingo, and Ramp are customers. Something like 75% of Y Combinator companies use the platform, and its AI-powered questionnaire automation hits an 80% immediate acceptance rate. With $504 million in total funding from Goldman Sachs Alternatives, Sequoia, JPMorgan Chase, and CrowdStrike Ventures, Vanta is estimated at around $220 million in ARR.

What makes Vanta sticky is not a contract but architecture. More than 200 in-house connectors and 100 third-party systems, all continuously pulling compliance evidence from a customer's tech stack. Try walking away from that. You would need to re-establish every single integration, re-collect years of historical evidence, rebuild the monitoring pipeline from nothing. That takes six to nine months on a good day, with compliance gaps during the transition that could trigger the very audit failures the tool was supposed to prevent. For a company that built its audit posture around Vanta, switching is not a software decision. It is a regulatory risk decision.

Drata, Vanta's closest rival, has reached a $2 billion valuation with roughly $100 million in ARR and 7,000 customers, Notion and OpenAI among them. It acquired three companies in 2024 and 2025 (oak9, Harmonize, SafeBase) to deepen coverage. The two platforms are converging on similar feature sets, but the market is large enough for both, and in compliance, whoever got there first has the advantage.

Healthcare is where the regulatory moat runs deepest. Viz.ai, which holds the landmark De Novo FDA authorization for the first autonomous AI stroke detection system along with subsequent 510(k) clearances for pulmonary embolism, brain hemorrhage, and subdural hemorrhage, has raised $252 million at a $1.2 billion valuation. It is now deployed across something like 1,800 to 2,000 hospitals covering 230 million patient lives. And here is the compounding effect that makes this lane so hard to attack: each FDA clearance becomes a predicate device that speeds subsequent approvals, replicating the clinical validation data would cost a competitor years and tens of millions of dollars, and hospital workflow integration creates switching costs measured in years. Across the industry, the FDA has authorized more than 1,200 AI-enabled medical devices, with a record 295 clearances in 2025 alone.

In financial services, Behavox tells a version of the same story. The company monitors communications across 150 data types for the world's largest banks, processing voice, email, chat, and trading data for conduct surveillance and compliance. It grew ARR 44% in 2024, has been profitable since Q4 2023, and has never lost a customer, 100% retention. Its proprietary LLM, Quantum AI, runs with zero third-party dependencies, which is non-negotiable for financial institutions that cannot allow data to leave the building. Three of the ten largest global banks use it.

Governance tooling is starting to consolidate. Credo AI picked up Gartner Representative Vendor status for AI Governance (Mastercard is a customer, about $41 million in total funding). Snowflake's acquisition of TruEra in May 2024 sent a different kind of signal: standalone governance tools may get absorbed by bigger platforms rather than growing independently. Holistic AI, smaller at 43 employees, is carving out a position on EU regulatory compliance. Gartner projects the AI governance market will grow from $309 million in 2025 to roughly $4.8 billion by 2034, a 35.7% compound annual growth rate, which tells you something about how much compliance pain enterprises expect to absorb.

Here is the meta-point. Every additional regulation, every new compliance framework, every headline about AI bias or hallucination increases the value of the trust infrastructure layer. Companies that already embedded compliance tooling have a head start measured not in features but in audit history, regulatory relationships, institutional knowledge. These moats compound with time. That is what makes them dangerous to ignore.

Lane 5: Inference economics

The fifth lane is the most technical. For CFOs, it may be the most consequential. Intelligence itself is approaching zero cost. Deploying intelligence at scale is not. Inference, the computational process of generating each AI response, is becoming the dominant line item in enterprise AI budgets. The companies that can optimize it are building a new class of competitive advantage.

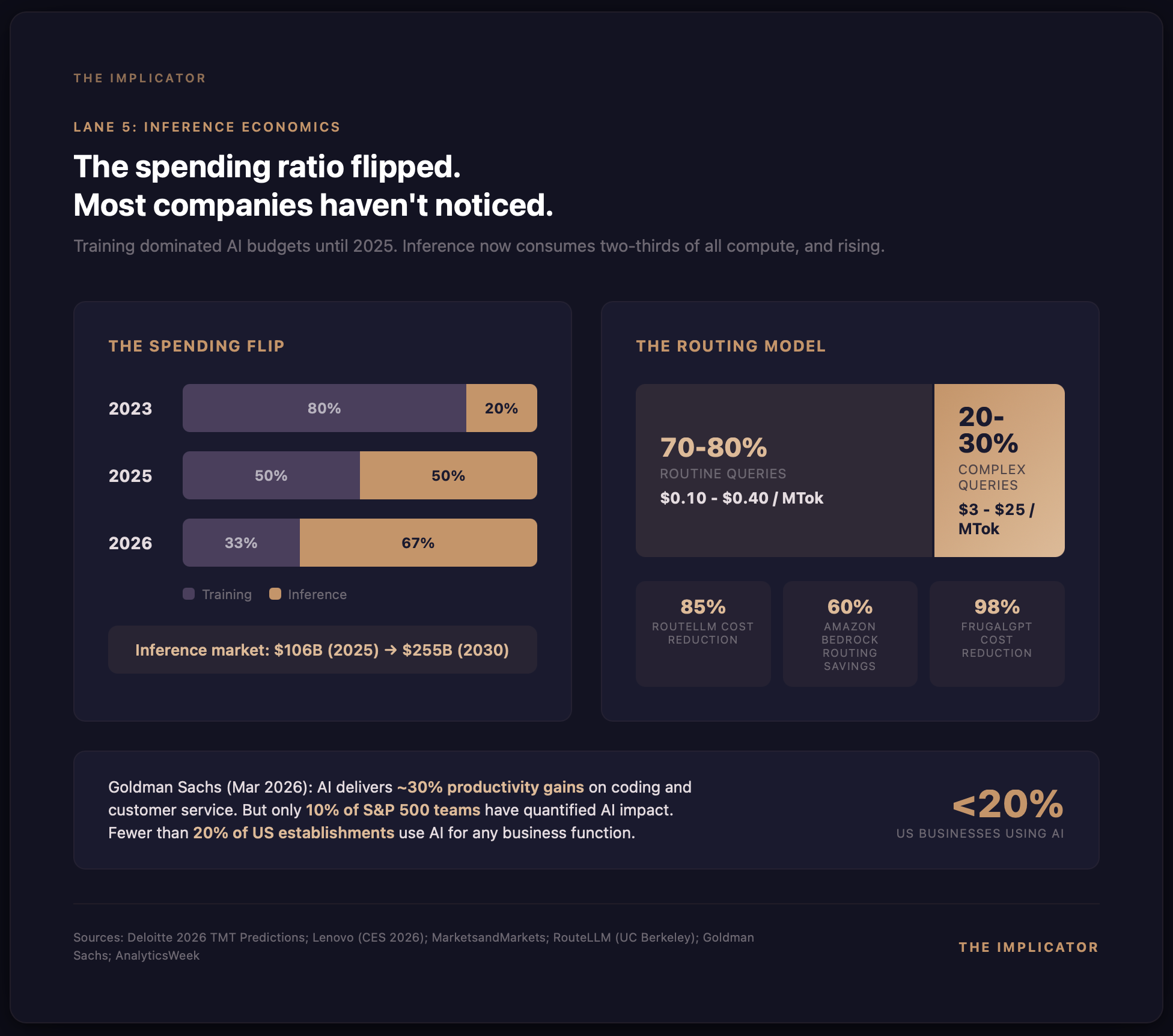

Lenovo CEO Yuanqing Yang made the shift concrete at CES 2026. Back in 2023, roughly 80% of AI compute spending went to training, just 20% to inference. "In the future," Yang told the audience, "those numbers are reversed." He is not guessing. Deloitte's 2026 TMT Predictions backs him up, showing inference climbing from a third of all AI compute in 2023 to half in 2025, with a projected two-thirds by 2026. By MarketsandMarkets' estimate, the inference market stands at $106 billion this year and should reach $255 billion by 2030. Most enterprises have not yet built the financial infrastructure to manage any of it.

AnalyticsWeek crystallized the anxiety in a March 2026 piece: "If an AI agent saves a customer service representative 15 minutes of work but costs $4.00 in inference tokens to run, the ROI is negative." The term they coined, "zombie agents," deserves to stick. It describes AI deployments that look impressive in demos but silently drain quarterly budgets through unmonitored token consumption. Their verdict: "The wow factor of AI has evaporated. In 2026, the Board of Directors wants to see the Efficiency Ratio."
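The quoted scenario can be turned into a simple per-task break-even check. The sketch below is an illustration, not AnalyticsWeek's method. The hourly labor cost is not given in the source, so it is left as a parameter, and the function names are hypothetical.

```python
def net_value_per_task(minutes_saved: float, hourly_labor_cost: float,
                       inference_cost: float) -> float:
    """Labor value recovered minus inference spend, in USD per task."""
    return minutes_saved / 60 * hourly_labor_cost - inference_cost


def break_even_hourly_cost(minutes_saved: float, inference_cost: float) -> float:
    """Hourly labor cost at which the agent exactly pays for itself."""
    return inference_cost * 60 / minutes_saved


# Figures from the quote: 15 minutes saved, $4.00 of inference per run.
print(break_even_hourly_cost(15, 4.00))

# ASSUMPTION: illustrative labor costs, not taken from the source.
for hourly in (12.0, 16.0, 30.0):
    print(hourly, net_value_per_task(15, hourly, 4.00))
```

With the quoted figures the break-even sits at $16 per hour of labor cost. Below that the agent loses money on every run, and above it the margin depends on how tightly token spend is monitored, which is the gap a "zombie agent" falls into.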

The best weapon against inference bloat is intelligent routing, the practice of sending simple queries to cheap, fast models and routing complex ones to frontier models. RouteLLM, a project from UC Berkeley and Canva, demonstrated 85% cost reduction while maintaining 95% quality on benchmarks. Amazon Bedrock's intelligent prompt routing, which selects between Anthropic's Claude models based on query complexity, achieves up to 60% cost savings in enterprise testing. Stanford's FrugalGPT work showed you can match GPT-4 on targeted tasks while spending 98% less. And this is already shipping in production: Checkr replaced GPT-4 with a fine-tuned Llama-3-8b, cut costs by 5x, and got 30x faster inference. Not a benchmark. A deployed system.

A pattern is forming across enterprises. Most routine queries, perhaps 70 to 80% of traffic, things like classification, extraction, and basic Q&A, can be handled by models charging $0.10 to $0.40 per million tokens. The remaining 20 to 30%, queries that require genuine reasoning or multi-step analysis, go to frontier models at $3 to $25 per million tokens. Blended cost comes out to a fraction of what enterprises paid twelve months ago, but only for companies that built the routing infrastructure to match query complexity to model capability. That last clause is where the opportunity sits.
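The blended-cost claim can be worked through with the ranges given above. This is a minimal sketch under two stated assumptions: traffic shares are treated as token shares (every query is assumed to use the same number of tokens), and routing is a simple lookup on task type. Production routers such as RouteLLM use learned classifiers instead. The names `pick_tier` and `blended_cost` are hypothetical.

```python
# Task categories the text describes as routine.
ROUTINE_TASKS = {"classification", "extraction", "basic_qa"}


def pick_tier(task_type: str) -> str:
    """Toy routing rule: routine work goes to the cheap tier."""
    return "cheap" if task_type in ROUTINE_TASKS else "frontier"


def blended_cost(cheap_share: float, cheap_price: float,
                 frontier_price: float) -> float:
    """Traffic-weighted price in USD per million tokens.

    ASSUMPTION: each query consumes the same number of tokens,
    so traffic share equals token share.
    """
    return cheap_share * cheap_price + (1 - cheap_share) * frontier_price


# Low and high ends of the ranges quoted in the text.
scenarios = {
    "low":  (0.80, 0.10, 3.00),   # 80% routine, cheapest prices
    "high": (0.70, 0.40, 25.00),  # 70% routine, priciest prices
}
for name, (share, cheap, frontier) in scenarios.items():
    blended = blended_cost(share, cheap, frontier)
    saving = (1 - blended / frontier) * 100
    print(f"{name}: ${blended:.2f}/M tokens, {saving:.0f}% below all-frontier")

print(pick_tier("extraction"), pick_tier("multi_step_analysis"))
```

Under these assumptions the blended price lands at about $0.68 per million tokens at the low end and about $7.78 at the high end. Both are well below sending everything to a frontier model, but the frontier share still accounts for most of the spend in either case.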

Model routers, token-level cost attribution tools, inference optimization platforms: a category that did not exist in 2024 is forming fast. LeanLM, MindStudio, Swfte AI are among the emerging players. The big cloud providers are embedding routing natively, Amazon Bedrock, Google Vertex AI, Azure AI all offer some form of intelligent model selection. But enterprise-grade routing, the kind with cost attribution by department, automated quality monitoring, policy-based rules, remains a greenfield opportunity.

Goldman Sachs research from March 2026 adds useful context but with a qualification that should give everyone pause. Software coding and customer service show roughly 30% productivity gains from AI, and those are real. But the same Goldman report found no meaningful link between AI adoption and economy-wide productivity. Only 10% of S&P 500 teams had quantified AI impact. Fewer than 20% of US establishments use AI for any business function at all. The inference economics opportunity is enormous. The market is still in its earliest stages of understanding what it is actually spending.

The M&A signal

The market narrative says the model moat has evaporated. The M&A activity in Q1 2026 confirms it in cash.

IBM wrote the biggest check: roughly $11 billion for Confluent, whose Apache Kafka-based data streaming infrastructure runs inside about 40% of the Fortune 500. Announced December 7, closed March 17, the deal was about one thing. As Rob Thomas, IBM's SVP of Software, put it: "Transactions happen in milliseconds, and AI decisions need to happen just as fast." Not a model acquisition. A plumbing acquisition.

On March 9, OpenAI acquired Promptfoo, an open-source LLM evaluation and red-teaming framework used by more than 25% of the Fortune 500 and 350,000 developers. Promptfoo had raised an $18.4 million Series A from Insight Partners just eight months before. Sit with that for a second. The company most associated with frontier model development bought a safety and evaluation tool. If that is not an admission that value is migrating from the model to the infrastructure around it, what would be?

The next day, Meta acquired Moltbook, a social network for AI agents claiming more than 109,000 human-verified AI agents, and folded the founders into its Superintelligence Labs. Remember, Meta gives Llama away for free. What it paid actual money for was the agent coordination layer.

Specter AI's Value Chain Map, published January 2026, cataloged 353 companies representing more than $250 billion in cumulative private funding across six layers, from compute and models through vertical AI platforms. Roughly 125 raised rounds in 2025 alone. Capital is flowing not to model development but to infrastructure, integration, and governance. The layers that surround the models, not the models themselves.

IMAP Germany's AI Report, published February 2026, documented the structural repricing underway in software markets. Most vulnerable to AI disruption: workflow-heavy categories like sales and marketing, HR, collaboration tools. Most resilient: deeply embedded systems, ERP, security, vertical software. The report flagged DeepSeek and Claude as the two most significant repricing events, not because of model quality alone, but because of what their pricing and platform strategies imply for every company that built a business assuming intelligence would stay expensive.

The commodity trap and who avoids it

Gabe Goodhart, Chief Architect for AI Open Innovation at IBM, has been among the more candid voices on commoditization. In a January 2026 interview: "I do believe we're gonna approach a space where the models themselves are commoditized. You can pick the model that fits your use case just right and be off to the races." Pay attention to the phrasing. Goodhart is not predicting some future event. He is describing what is already happening.

The data backs him up. OpenAI's own financials, first reported by The Information in December 2025, showed compute margins climbing from roughly 35% in January 2024 to 52% by year-end, then approximately 70% by October 2025. OpenAI is getting more efficient at producing intelligence, which means prices have room to fall further still. The company remains unprofitable overall, weighed down by massive R&D and training costs, but the inference margin trajectory points one direction: intelligence as a utility.

Kevin Chung, Chief Strategy Officer at Writer, described the organizational shift bluntly: as customers deployed more sophisticated agents spanning departments, teams, and workflows, it became clear you cannot build in isolation. The demand has moved from "give me a smarter model" to "give me the infrastructure to orchestrate agents across my entire organization."

That is the core tension of the AI economy in 2026. Model providers are locked in a pricing race to zero. Hardware providers are capturing margin on scarcity. And in between, a new class of company is emerging. Switchboards, wiring, compliance layers, cost optimization engines, data flywheels. Everything that makes the commodity input useful inside the enterprise.

A decision framework for the C-suite

For the CEO or CTO reading this: the strategic implications compress into five questions.

First, where is your AI abstraction layer? If you hard-coded a specific model provider into production, you are accumulating technical debt with every deployment. The companies that tested DeepSeek within hours of its release had abstraction layers. The ones that could not are now spending months on migration planning. Model-agnostic infrastructure is not a luxury. It is a hedge against a market where the best model changes quarterly.
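To make the abstraction-layer point concrete, here is a minimal sketch in Python. It assumes a provider can be reduced to a function from prompt to completion; the `ModelGateway` class and the two stand-in providers are hypothetical names for illustration, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Assumption: a provider is any callable that maps a prompt to a completion.
Provider = Callable[[str], str]


@dataclass
class ModelGateway:
    """Hypothetical single entry point that application code calls."""
    providers: Dict[str, Provider]
    active: str

    def complete(self, prompt: str) -> str:
        # Application code never names a vendor; it only calls the gateway.
        return self.providers[self.active](prompt)

    def switch(self, name: str) -> None:
        # Swapping models is a one-line configuration change.
        if name not in self.providers:
            raise KeyError(f"unknown provider: {name}")
        self.active = name


# Stand-in providers. Real ones would wrap each vendor's SDK.
def incumbent_model(prompt: str) -> str:
    return f"[incumbent] {prompt}"


def challenger_model(prompt: str) -> str:
    return f"[challenger] {prompt}"


gateway = ModelGateway(
    providers={"incumbent": incumbent_model, "challenger": challenger_model},
    active="incumbent",
)
before = gateway.complete("Summarize Q1 churn")
gateway.switch("challenger")
after = gateway.complete("Summarize Q1 churn")
```

The calling code is identical before and after the switch. That is what makes same-day testing of a new model plausible: only the gateway's configuration changes, not the applications built on top of it.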

Second, what proprietary data are you generating? Every AI interaction inside your organization produces data. Queries, responses, corrections, decisions, outcomes. If you are not capturing and structuring that into a proprietary knowledge graph, you are giving away the most defensible asset your AI deployment can produce. Glean is not valued at $7.2 billion because of its search algorithm. It is valued for organizational memory that no one can replicate.
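As a rough illustration of what "capturing and structuring" could look like at its simplest, the sketch below logs one interaction as a structured record. The field names mirror the five data types listed above; the `InteractionRecord` class and the JSON-lines format are assumptions for illustration, not a description of how Glean or any other vendor stores data.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class InteractionRecord:
    """Hypothetical schema: one row per AI interaction."""
    department: str
    query: str
    response: str
    correction: Optional[str] = None  # what a human changed, if anything
    decision: Optional[str] = None    # what was decided on the basis of the response
    outcome: Optional[str] = None     # what happened afterwards


def to_jsonl(record: InteractionRecord) -> str:
    # One JSON object per line keeps the log append-only and easy to load later.
    return json.dumps(asdict(record), sort_keys=True)


record = InteractionRecord(
    department="legal",
    query="Flag indemnity clauses",
    response="Clauses 4 and 9",
    correction="Clause 9 is a warranty, not an indemnity",
    outcome="accepted_after_edit",
)
line = to_jsonl(record)
```

The correction field is the part worth noticing. A human fixing a model's answer is exactly the kind of signal that exists only inside the organization that generated it.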

Third, how deep is your workflow integration? The strongest moats belong to companies where AI is not a tool employees use but a colleague they depend on. Augment's Augie, Harvey's legal AI, Gong's revenue intelligence: same principle. When AI handles communications, decisions, and workflows across multiple channels, extracting it means rebuilding the operational architecture. The switching cost is not a contract. It is organizational disruption.

Fourth, where do you stand on compliance? The EU AI Act's high-risk provisions land in August 2026. Companies that already embedded compliance infrastructure (Vanta's continuous monitoring, Viz.ai's FDA clearance portfolio) hold a head start measured in years and audit history. Starting compliance work now means building under time pressure, with penalties that reach up to 7% of global annual turnover for the most serious violations hanging over every deadline.

Fifth, do you have an inference budget? Training used to dominate AI spending. Now inference does, and if you are not tracking token consumption by department, routing queries to the right model tier, and measuring cost-per-outcome instead of cost-per-token, you are almost certainly burning money. Enterprises that implemented intelligent routing report 30 to 85% cost reductions with minimal quality impact. Everyone else is running zombie agents. They look impressive in quarterly reviews. They quietly drain the AI budget.
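The three practices named above (tracking spend by department, routing by model tier, measuring cost-per-outcome) can be sketched in a few lines of Python. Everything numeric here is an assumption: the prices in `PRICE_PER_MTOK` are placeholders rather than any provider's rates, and the rule in `pick_tier` is a deliberately naive stand-in for a real router.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical prices in dollars per million tokens; not real vendor rates.
PRICE_PER_MTOK = {"commodity": 0.50, "frontier": 15.00}

# Assumption: routine task types can be served by the cheaper tier.
SIMPLE_TASKS = {"classification", "extraction", "summarization", "routing"}


def pick_tier(task_type: str) -> str:
    """Naive router: routine work goes to the commodity tier."""
    return "commodity" if task_type in SIMPLE_TASKS else "frontier"


@dataclass
class Call:
    department: str
    task_type: str
    tokens: int
    succeeded: bool  # did the call produce a usable business outcome?


def cost(call: Call) -> float:
    return call.tokens / 1_000_000 * PRICE_PER_MTOK[pick_tier(call.task_type)]


def spend_by_department(calls: List[Call]) -> Dict[str, float]:
    totals: Dict[str, float] = defaultdict(float)
    for c in calls:
        totals[c.department] += cost(c)
    return dict(totals)


def cost_per_outcome(calls: List[Call]) -> float:
    """Total spend divided by successful outcomes, not by tokens."""
    wins = sum(1 for c in calls if c.succeeded)
    total = sum(cost(c) for c in calls)
    return total / wins if wins else float("inf")


calls = [
    Call("support", "classification", 2_000_000, True),
    Call("support", "summarization", 2_000_000, False),
    Call("legal", "contract_reasoning", 1_000_000, True),
]
by_dept = spend_by_department(calls)  # {"support": 2.0, "legal": 15.0}
per_outcome = cost_per_outcome(calls)  # 17.0 total / 2 successes = 8.5
```

The failed summarization call costs the same per token as the successful one, which is why cost-per-token hides waste that cost-per-outcome exposes. An agent that consumes tokens without producing outcomes pushes this number toward infinity.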

What to watch

Five signals worth tracking over the next six months.

👉 Salesforce Agentforce ARR. Analysts project $800 million by Q4 FY2026. Hit that number and orchestration-layer monetization is validated at enterprise scale. A stall would suggest model interchangeability is eroding Agentforce's pricing power faster than expected.

👉 EU AI Act first enforcement actions. The high-risk provisions become binding on August 2. First penalties will reveal whether compliance infrastructure companies like Vanta and Drata captured the market or in-house teams absorbed the work. Watch who announces CE marking first.

👉 Anthropic's run-rate trajectory. The company reached $14 billion in annualized run rate by early 2026. Sustaining that through Q3 validates orchestration-over-model. Deceleration would suggest the model layer still commands more value than the infrastructure play implies.

👉 Inference-to-training spend crossover. Deloitte projects inference will represent two-thirds of all AI compute by year-end. If enterprise CFOs start publishing inference budgets as a separate line item, routing and optimization tools become the fastest-growing infrastructure category in AI.

👉 IBM-Confluent integration milestones. IBM paid $11 billion for real-time data streaming. First joint product announcements will signal whether the data-velocity thesis translates into enterprise AI agent deployments or stays an expensive hedge on a future that has not yet arrived.

The switchboard thesis

The intelligence arbitrage is closing. The gap between frontier models and commodity models, which once justified premium pricing and investor enthusiasm, has narrowed to the point where it matters only for the most demanding applications. Classification, extraction, summarization, routing, basic reasoning: roughly 80% of enterprise AI use cases. All of them can now run on models costing two to three orders of magnitude less than they did in 2023.

That does not make AI a bad investment. It means the investment thesis has shifted. The companies capturing durable value in 2026 are not building the best generators. They are building switchboards, wiring factories, redesigning floor plans. Orchestration layers. Proprietary data flywheels. Deeply integrated workflow platforms. Compliance infrastructure. Inference optimization engines. Five lanes. That is where defensible margin is being constructed.

The electricity parallel holds in one final respect. The companies that won the electric age were not, by and large, the electric utilities. GE and Westinghouse mattered, sure. But the real wealth creation happened at Ford, which redesigned the factory from scratch, and at the thousands of manufacturers that figured out how to use distributed motors to build products steam could never have supported. Utilities provided the commodity input. Switchboard companies captured the margin.

In the AI economy of 2026, intelligence is the commodity input. The question is not whether your company uses AI. It is whether you are building the switchboard or still optimizing the generator.

By current market estimates, the answer to that question is worth somewhere between $250 billion in private capital and $2.52 trillion in annual spending. The arbitrage window is closing. The infrastructure window is wide open.

Key Takeaways

- Model-agnostic infrastructure is a hedge, not a luxury: Companies with abstraction layers tested DeepSeek within hours of its release; those without face months of migration planning and millions in accumulated technical debt.

- Orchestration is the new distribution: Anthropic, NVIDIA, and Salesforce all pivoted from model quality competition to workflow control, and the Q1 2026 M&A pattern confirms the migration is structural.

- Compliance moats compound with time: Every new regulation, every audit cycle, and every FDA clearance adds to the switching cost. Companies that embedded compliance tooling early hold head starts measured in years, not features.

- The HALO thesis has a blind spot: Hardware appreciates and models commoditize, but the middle layer of orchestration, integration, and governance is where enterprise margin concentrates.

- Behavioral lock-in beats contractual lock-in: When AI becomes a colleague handling communications, decisions, and workflows across multiple channels, the switching cost is organizational disruption, not a termination fee.

- Inference is the new FinOps discipline: The training-to-inference spend ratio has flipped, and enterprises routing 70-80% of queries to commodity models report 30-85% cost reductions with minimal quality loss.

- The Q1 2026 M&A signal is definitive: IBM bought data velocity ($11B), OpenAI bought evaluation and red-teaming tooling, and Meta bought agent coordination. None acquired a model company.

Sources & Further Reading

Primary Sources

- MIT FutureTech, "The Price of Progress: Algorithmic Efficiency and the Falling Cost of AI Inference" (NeurIPS 2025 / arXiv 2511.23455)

- Goldman Sachs, "The HALO Effect: Heavy Assets, Low Obsolescence in the AI Era" (February 2026)

- Paul David, "The Dynamo and the Computer: An Historical Perspective on the Modern Productivity Paradox" (American Economic Review, 1990)

- Federal Reserve Bank of San Francisco, Economic Letter (February 2026)

- EU AI Act enforcement timeline and requirements

Research & Analysis

- Gartner, Global AI Spending Forecast (January 2026)

- Deloitte, 2026 TMT Predictions: The Inference Crossover

- Foundation Capital, "The Context Graph" (December 2025)

- IMAP Germany, AI Report (February 2026)

- Specter AI, Value Chain Map: 353 companies, $250B+ cumulative funding (January 2026)

- RouteLLM, UC Berkeley & Canva: Intelligent model routing research

- Model Context Protocol specification, Anthropic / Linux Foundation

- CloudBees, DevOps Migration Index (2025)

Further Reading

- Is AI Eating Software? The Moat Is Moving Down the Stack

- OpenAI Launches Frontier to Manage AI Agents From Rival Vendors in One System

- GitHub Won't Compete on Agent Quality. It's Building the Layer Beneath All of Them

- DeepSeek Cuts Inference Costs by 10x

- Anthropic Ships Frontier-Class Coding at Commodity Prices
